On the Limits of LLM Reasoning: When Accuracy Is Not Enough

Accuracy vs. reasoning robustness in large language models.

Accuracy vs. reasoning robustness in large language models.Do large language models truly reason, or do they mainly recall memorized patterns?

In our recent paper published in IEEE Access, we investigate this question through a controlled evaluation of 16 proprietary and open-weight LLMs, focusing on how accuracy, contamination, translation, and answer formulation interact in multiple-choice benchmarks.

🔍 A simple intervention with strong effects

We introduce a minimal yet revealing modification: replacing the correct answer with “None of the other answers”, which becomes the new correct option.

This breaks surface-level question–answer associations and forces eliminative, multi-step reasoning: models must discard all alternatives before selecting the correct choice.

📉 What happens when recall is blocked?

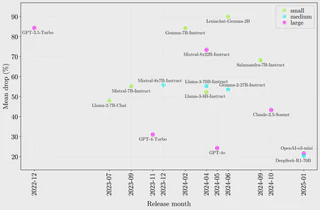

- Accuracy drops dramatically, ranging from 10% to 93% across models.

- These collapses expose major weaknesses in verification-based reasoning, even in systems with very high baseline accuracy.

- Several large and widely used models fall below chance level under this condition.

🌍 Generalization is not the problem

We further disentangle memorization from reasoning by evaluating models under two controlled dimensions:

Public vs. private data (MMLU vs. UNED-Access):

Models perform slightly better on the private dataset, suggesting that intrinsic task difficulty matters more than exposure. However, public benchmarks degrade more when recall is blocked, revealing partial contamination effects.Original vs. professionally translated questions (English/Spanish):

Cross-lingual differences are minimal. Both accuracy and robustness remain remarkably stable, indicating strong cross-lingual generalization.

📊 Accuracy ≠ robustness

Accuracy alone is a weak proxy for reasoning:

- Correlations between accuracy and robustness are moderate overall.

- Only in low-contamination settings does accuracy become a reliable indicator of genuine reasoning behavior.

🚀 What actually improves reasoning?

- Model size is not a reliable predictor of robustness.

- Instead, recent models explicitly trained for reasoning show substantially smaller drops.

- Training strategies and architectural choices matter more than parameter count.

✅ Takeaway

Standard leaderboard accuracy hides important gaps.

Current LLMs generalize well across datasets and languages, but still struggle with true eliminative reasoning.

Encouragingly, newer reasoning-oriented models show clear progress—suggesting that how we train models matters more than how big we make them.

👉 Read the full paper:

E. S. Salido, J. Gonzalo and G. Marco, “On the Limits of LLM Reasoning: Evidence From Contamination, Translation, and Answer Modification in Multiple-Choice Benchmarks,” in IEEE Access, vol. 14, pp. 9384-9393, 2026, doi: 10.1109/ACCESS.2026.3651579.

🔗 IEEE Xplore: https://ieeexplore.ieee.org/document/11333297